https://solana.io

One of the most difficult problems in distributed systems is agreement on time. Google’s Spanner uses synchronized atomic clocks between its datacenters. Google’s engineers synchronize these clocks to a very high precision and constantly maintain them.

This problem is even harder in adversarial systems like blockchains. Nodes in the network can’t trust an external source of time or any timestamp that appears in a message. Hashgraph, for example, solves this problem with a “median” timestamp. Each message that is seen by the network is signed and timestamped by a supermajority of the network. The median timestamp for the message is what Hashgraph calls “fair” ordering. Each message has to travel to a supermajority of the nodes in the system; then, after the message collects enough signatures, the entire set needs to be propagated to the entire network. As you can imagine, this is really slow.

What if you could simply trust the timestamp that is encoded into the message? An enormous wealth of distributed systems optimizations would suddenly be at your disposal:

- Liskov, B. Practical uses of synchronized clocks in distributed systems

Proof of History

What if, instead of trusting the timestamp, you could prove that the message occurred sometime before and after an event? When you take a photograph with the cover of the New York Times, you are creating a proof that your photograph was taken after that newspaper was published, or that you have some way to influence what the New York Times publishes. With Proof of History, you can create a historical record that proves that an event occurred at a specific moment in time.

Proof of History Timestamps

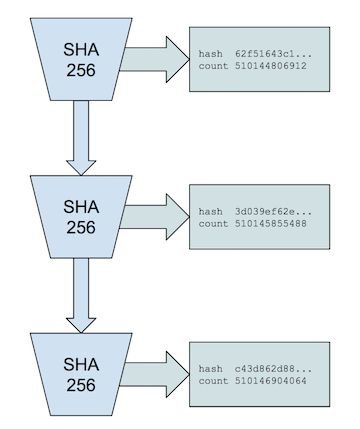

The Proof of History is a Verifiable Delay Function. Our specific implementation uses a sequential pre-image resistant hash that runs over itself continuously with the previous output used as the next input. Periodically the count and the current output are recorded.

For a SHA256 hash function, this process is impossible to parallelize without a brute force attack using 2¹²⁸ cores.

We can then be certain that real time has passed between each counter as it was generated, and that the recorded order of each counter is the same as it was in real time.
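The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not Solana's implementation: the names `generate_sequence` and `record_every`, the seed value, and the tiny hash counts are all assumptions chosen for readability.

```python
import hashlib

def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def generate_sequence(seed: bytes, total_hashes: int, record_every: int):
    """Hash the previous output over and over, recording (count, output) periodically.

    Hypothetical helper for illustration only.
    """
    state = sha256(seed)            # count 1: hash of the starting value
    count = 1
    recorded = [(count, state)]
    while count < total_hashes:
        state = sha256(state)       # previous output is the next input
        count += 1
        if count % record_every == 0:
            recorded.append((count, state))
    return recorded

entries = generate_sequence(b"any random starting value", 1000, 100)
for count, output in entries:
    print(count, output.hex()[:16])
```

Each step needs the output of the step before it, so the only way to reach the output at count 1000 is to compute the 999 hashes that come first.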

Upper bound on Time

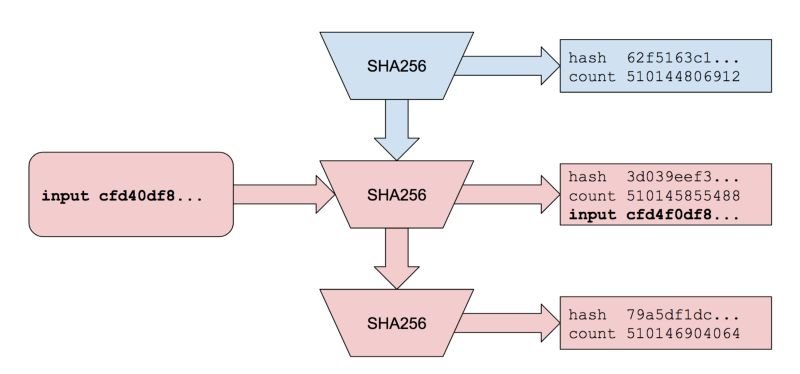

Recording messages into a Proof of History sequence

Data can be inserted into the sequence by appending the data to the previously generated state. The state, input data, and count are all published. Appending the input causes all future output to change unpredictably. It is still impossible to parallelize, and as long as the hash function is pre-image and collision resistant, it is impossible to create an input that would generate a desired hash in the future, or create an alternative history with the same hashes. We can prove that time passed between any two append operations. We can prove that the data was created sometime before it was appended, just like we know that the events published in the New York Times occurred before the newspaper was written.
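As a rough sketch of the append operation, assuming plain byte concatenation of the state and the data (the exact encoding is not specified here), the hypothetical `run` helper below mixes data into the chain at chosen counts and reports the published (count, state, data) tuples.

```python
import hashlib

def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def run(seed: bytes, total_hashes: int, inserts: dict):
    """Run the hash chain; `inserts` maps a count to data appended at that count.

    Returns the final state and the published (count, state_hex, data) events.
    Concatenation as the mixing step is an assumption for illustration.
    """
    state = sha256(seed)                      # count 1
    events = []
    for count in range(2, total_hashes + 1):
        data = inserts.get(count, b"")
        state = sha256(state + data)          # append data to the previous state
        if data:
            events.append((count, state.hex(), data))
    return state, events

plain, _ = run(b"seed", 500, {})
mixed, events = run(b"seed", 500, {336: b"photograph.jpg sha256"})

print(events[0][0])       # count at which the data was recorded
print(plain != mixed)     # every output after the insert differs
```

Outputs before the insert are identical in both runs; everything from the insert onward diverges, which is why the recorded data cannot be swapped out later without recomputing the rest of the sequence.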

Lower bound on Time

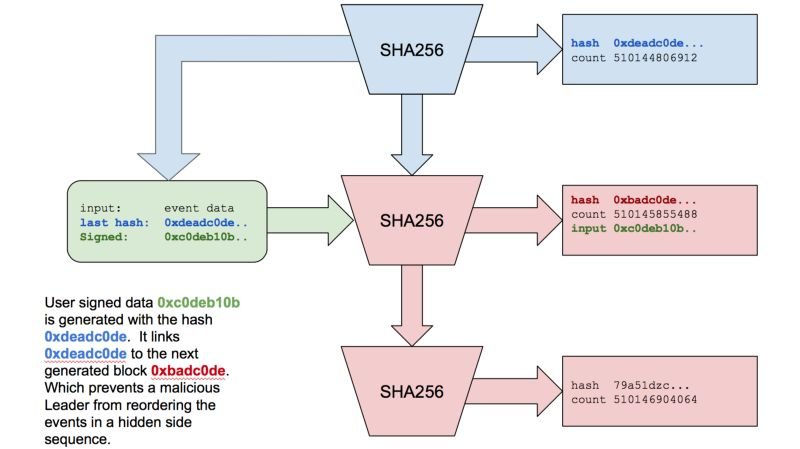

Lower bound on time with Proof of History

Inputs into Proof of History can have references back to Proof of History itself. The back reference could be inserted as part of a signed message with the user’s signature, so it cannot be modified without the user’s private key. This is just like taking a photograph with the New York Times newspaper in the background. Because this message contains the hash 0xdeadc0de, we know it was generated after count 510144806912 was created.

But since the message is also inserted back into the Proof of History stream, it is as if you took a photo with the New York Times in the background, and the next day the New York Times published that photo. We know that the content of that photo existed before and after a specific day.
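The two bounds can be illustrated with a small sketch. Everything here is an assumption made for the example: the counts (100 and 150), the message layout, and the `check_bounds` helper are invented, and the user's signature is left out entirely to keep the code dependent only on the standard library.

```python
import hashlib

def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def extend(state: bytes, steps: int) -> bytes:
    """Advance the hash chain by `steps` hashes."""
    for _ in range(steps):
        state = sha256(state)
    return state

# Generator side (made-up counts).
state_100 = extend(sha256(b"seed"), 99)     # output at count 100
# The message embeds the count-100 output: the "newspaper in the photo".
message = b"ref_count=100;ref_hash=" + state_100.hex().encode() + b";body=hello"
state_149 = extend(state_100, 49)           # outputs for counts 101..149
state_150 = sha256(state_149 + message)     # message recorded at count 150

def check_bounds(message, ref_hash, prev_state, recorded_state):
    """Hypothetical verifier check, ignoring signatures."""
    # Lower bound: the message contains a hash that did not exist before ref_count.
    created_after = ref_hash.hex().encode() in message
    # Upper bound: the message is part of the input that produced the recorded state.
    created_before = sha256(prev_state + message) == recorded_state
    return created_after, created_before

print(check_bounds(message, state_100, state_149, state_150))
```

In a real system the message would also carry the user's signature over the back reference, so that nobody else could rewrite the reference after the fact.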

Verification

While the recorded sequence can only be generated on a single CPU core, the output can be verified in parallel.

Parallel Verification

Each recorded slice can be verified from start to finish on a separate core, so the whole sequence can be checked in 1/(number of cores) of the time it took to generate. A modern-day GPU with 4000 cores can therefore verify one second of the sequence in 0.25 milliseconds.
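A minimal sketch of slice-by-slice verification follows. The function names, slice sizes, and worker count are assumptions, and a thread pool is used only to show that slices are independent of each other; an actual speedup would need native parallelism such as the GPU mentioned above.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor

def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def generate(seed: bytes, num_slices: int, hashes_per_slice: int):
    """Sequentially build the chain, recording one checkpoint per slice."""
    state = sha256(seed)
    checkpoints = [state]
    for _ in range(num_slices):
        for _ in range(hashes_per_slice):
            state = sha256(state)
        checkpoints.append(state)
    return checkpoints

def verify_slice(start: bytes, end: bytes, hashes_per_slice: int) -> bool:
    """Recompute one slice from its recorded start and compare with its recorded end."""
    state = start
    for _ in range(hashes_per_slice):
        state = sha256(state)
    return state == end

def verify_parallel(checkpoints, hashes_per_slice: int, workers: int = 4) -> bool:
    # Each (start, end) pair can be checked without knowing any other slice.
    pairs = list(zip(checkpoints, checkpoints[1:]))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(lambda p: verify_slice(p[0], p[1], hashes_per_slice), pairs)
        return all(results)

checkpoints = generate(b"seed", 8, 1000)
print(verify_parallel(checkpoints, 1000))

# The arithmetic from the text: one second of work split across 4000 cores.
verify_ms = 1000.0 / 4000
print(verify_ms, "ms")
```

The generator had to produce the checkpoints in order, but the verifier already has every slice's start and end, which is what makes the work divisible.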

ASICS

Isn’t every CPU different, and some much faster than others? How do you actually trust that “time” as it’s generated by our SHA256 loop is accurate?

This topic deserves its own article, but the long story short is that we don’t much care if some CPUs are faster than others, or if an ASIC can be faster than the CPUs available to the network. The most important thing is that there is a finite bound on how much faster an ASIC can get.

We are using SHA256, and thanks to Bitcoin there has been significant research in making this cryptographic hash function fast. This function is impossible to speed up by using a larger die area, like a Look Up Table, or unrolling it without impact to clock speed. Both Intel and AMD are releasing consumer chips that can do a full round of SHA256 in 1.75 cycles.

Because of this, we have pretty good certainty that a custom ASIC will not be 100x faster, let alone 1000x, and most likely will be within 30% of what is available to the network. We can construct protocols that exploit this bound and only allow an attacker a very limited, easily detected, and short-lived opportunity for a denial of service attack. More on that in the next article!

Code

solana: High Performance Blockchain (github.com)

If you like Rust and CUDA and want to build the world’s fastest blockchain, please reach out to us at hello@solana.io